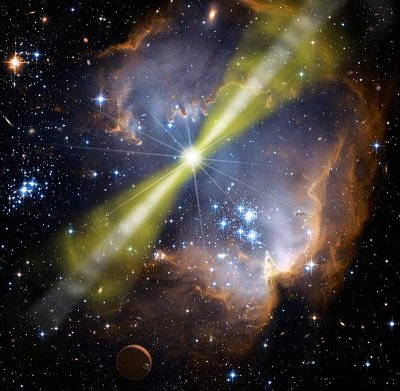

Gamma Ray Burst

Source: NASA/Swift/Mary Pat Hrybyk-Keith and John Jones

Imagine a powerful energy beam shooting across the universe and frying anything in its path. It may sound like something out of science fiction, but it is real. Such an energy beam is called a gamma ray burst (GRB).

Most observed GRBs are believed to be produced during the gravitational collapse of a rapidly rotating massive star at the end of its life. Such a star is much more massive than our sun. At the end of its life its nuclear fuel is used up and it can no longer maintain its size, so it begins to collapse into a black hole due to the immense gravitational force created by its extremely large mass. The combination of this collapse into a black hole and the star's rapid spin is what causes the GRB.

There is some uncertainty as to how exactly a GRB is generated, but the following explanation is a good basic summary.

As the star's material collapses, it feeds a swirling accretion disk that surrounds the black hole. Material from the accretion disk is then pulled into the black hole. Because the angular momentum of the rapidly rotating star is conserved, two narrow, highly energetic jets of star material are emitted in opposite directions along the star's rotation axis. The two jets are shown in the picture above. These jets balance out the angular momentum lost by the star material as it falls into the black hole. The jets consist of a plasma of protons, positrons, and electrons, ejected at very close to the speed of light. The jet opening angle can range from 2 to 20 degrees.

The highly energetic jets collide with the outer star material and, as a result of the collision, produce intense gamma radiation which is emitted in the same direction as the jets. Gamma radiation is the most energetic form of electromagnetic radiation: its photons carry more energy than those of any other part of the spectrum.

The narrow jet angle of 2 to 20 degrees means that the gamma radiation energy can efficiently be carried over vast distances. This is because the energy is focused as a beam (similar to a laser) which diverges (spreads out) very gradually. The beam energy still becomes less concentrated the farther it travels, but because that energy is so enormous, the source must be many thousands of light years away from a planet (like ours) for it not to pose a threat.
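To get a feel for how gradually such a beam spreads, the short sketch below treats the jet as a simple cone and works out how wide it is after travelling 10,000 light years. It assumes the quoted 2 to 20 degrees is the full opening angle of the cone (the text does not say whether it is the full or half angle), and the function name is just an illustrative choice.

```python
import math

def beam_diameter_ly(distance_ly, full_angle_deg):
    """Diameter of a cone-shaped beam after travelling distance_ly light years.

    Assumption: full_angle_deg is the full opening angle of the cone.
    """
    half_angle = math.radians(full_angle_deg) / 2
    return 2 * distance_ly * math.tan(half_angle)

for angle in (2, 20):
    width = beam_diameter_ly(10_000, angle)
    print(f"{angle:>2} degree jet: about {width:,.0f} light years wide "
          f"after travelling 10,000 light years")
```

Under these assumptions the beam is roughly 350 light years wide for a 2 degree jet and roughly 3,500 light years wide for a 20 degree jet, which is still a narrow target compared with the distance travelled.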

The duration of a GRB can range from several milliseconds to tens of minutes. A GRB is the most powerful explosion in the universe since the Big Bang, even more powerful than a supernova explosion. A typical gamma ray burst releases as much energy in a few seconds as the sun will in its entire 10-billion-year lifetime. It is known that GRBs happen simultaneously with some supernova explosions. In other words, a massive star at the end of its life can simultaneously explode as a supernova and produce a GRB.
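The comparison with the sun can be turned into a rough number. The sketch below multiplies the sun's present-day power output by a 10 billion year lifetime. It assumes the sun shines at a constant rate for its whole life (it does not, so this is only an order-of-magnitude estimate), and the 10 second burst duration used at the end is an assumed value for illustration.

```python
SOLAR_LUMINOSITY_W = 3.828e26          # nominal power output of the sun, in watts
SECONDS_PER_YEAR = 365.25 * 24 * 3600  # one Julian year, in seconds
SUN_LIFETIME_YEARS = 10e9              # lifetime quoted in the text

def solar_lifetime_energy_joules():
    """Energy radiated by the sun over its lifetime, assuming constant output."""
    return SOLAR_LUMINOSITY_W * SECONDS_PER_YEAR * SUN_LIFETIME_YEARS

energy = solar_lifetime_energy_joules()
print(f"Sun's lifetime output: about {energy:.1e} joules")

# Average power if that energy is released over an assumed 10 second burst.
print(f"Average power over 10 seconds: about {energy / 10:.1e} watts")
```

This gives about 1.2 x 10^44 joules, which is the scale of energy a typical GRB would release in seconds according to the comparison above.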

The fact that the energy of a GRB is so enormous and is transmitted in the form of a beam means that we can see it from very, very far away. A GRB can be observed even if it came from the edge of the observable universe, which is billions of light years away. In the gamma radiation part of the electromagnetic spectrum, a GRB outshines the rest of the universe at the peak of its brightness.

You may be wondering where the immense energy from a GRB comes from. It comes from the cataclysmic collapse of star material into a black hole, which releases gravitational potential energy.

Only GRBs pointed directly at us can be detected (by satellites orbiting the earth). For every GRB that is detected there are hundreds more occurring in the observable universe, which point in other directions.
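The "hundreds more" figure follows from simple geometry. A cone with half-angle a covers a fraction (1 - cos a) / 2 of the sky, so two opposite jets cover a fraction 1 - cos a. The sketch below applies this to the 2 to 20 degree range, assuming that range is the full opening angle, that GRBs point in random directions, and that a burst is seen only if we sit inside one of its two jets. The function names are illustrative.

```python
import math

def sky_fraction(full_angle_deg):
    """Fraction of the whole sky covered by two opposite cone-shaped jets."""
    half_angle = math.radians(full_angle_deg) / 2
    return 1 - math.cos(half_angle)

def missed_per_detected(full_angle_deg):
    """Number of GRBs pointing elsewhere for each one pointing at us."""
    return 1 / sky_fraction(full_angle_deg) - 1

for angle in (2, 10, 20):
    print(f"{angle:>2} degree jets: about {missed_per_detected(angle):,.0f} "
          f"unseen bursts for every one aimed at us")
```

With these assumptions a 10 degree jet gives a few hundred unseen bursts per detected one, while the extremes of the range give anywhere from several dozen to several thousand.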

Not all GRBs are thought to be produced by the collapse of massive stars. Some may result from two neutron stars colliding. Two neutron stars, when caught in each other's powerful gravitational pull, will gradually spiral towards each other. Upon impact they will merge and then violently collapse into a black hole. The influx of material into the black hole produces an accretion disk, from which two relativistic jets, and subsequently a GRB, are produced. A similarly produced GRB can also result from the merger of a black hole and a neutron star.

It is possible that a gamma ray burst occurring within our Milky Way galaxy could cause mass extinction if it pointed towards earth. It is speculated that a GRB 10,000 light years away or closer could devastate our planet.
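A rough way to see why distance matters so much is to spread the burst energy over the cross-section of the beam, which grows with the square of the distance. The sketch below does this under loudly stated assumptions: a total energy of 1.2 x 10^44 joules (roughly the sun's output over 10 billion years), a full jet opening angle of 10 degrees, and energy spread evenly across both jets. It is an illustration of the scaling, not a damage threshold.

```python
import math

METERS_PER_LIGHT_YEAR = 9.4607e15

ASSUMED_ENERGY_J = 1.2e44   # assumption: about the sun's output over 10 billion years
ASSUMED_ANGLE_DEG = 10      # assumption: full opening angle of each jet

def energy_per_square_meter(energy_j, distance_ly, full_angle_deg):
    """Energy delivered per square meter inside the beam at a given distance."""
    distance_m = distance_ly * METERS_PER_LIGHT_YEAR
    half_angle = math.radians(full_angle_deg) / 2
    beamed_fraction = 1 - math.cos(half_angle)  # share of the sky covered by both jets
    beam_area_m2 = beamed_fraction * 4 * math.pi * distance_m ** 2
    return energy_j / beam_area_m2

for distance_ly in (1_000, 10_000, 100_000):
    value = energy_per_square_meter(ASSUMED_ENERGY_J, distance_ly, ASSUMED_ANGLE_DEG)
    print(f"{distance_ly:>7,} light years: about {value:.1e} joules per square meter")
```

Every tenfold increase in distance cuts the energy arriving per square meter by a factor of one hundred, which is why a nearby burst is so much more dangerous than a distant one.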

If a GRB hit the earth from a star as close as our sun, the earth would be completely vaporized. But even if a GRB came from a star several thousand light years away, it would still be devastating to the earth. The most dangerous consequence would come from destruction of the ozone layer. The intense gamma radiation would deplete the ozone layer, and the earth would suddenly be exposed to harmful ultraviolet radiation from the sun, which would in turn cause mass extinction of life on earth.

In fact, a GRB may have been responsible for the Ordovician–Silurian extinction event about 450 million years ago.

Fortunately, GRBs are extremely rare: only a few occur per galaxy per million years. All the ones we have observed so far occurred in distant galaxies.

We have no way of knowing ahead of time if a gamma ray burst is headed our way. Gamma rays travel at the speed of light, so no signal can reach us ahead of the burst: by the time we see it, it will already be here. At best we can only scan for stars that may produce a GRB sometime in the future, and then (hoping for the best) determine whether or not the GRB would point towards earth. Fortunately, it is extremely unlikely to happen in our lifetime, but it may happen sometime in the very distant future.
